1: Duke University, Department of Biomedical Engineering

2: Duke University, Department of Electrical and Computer Engineering

3: Google Research, Applied Science Team

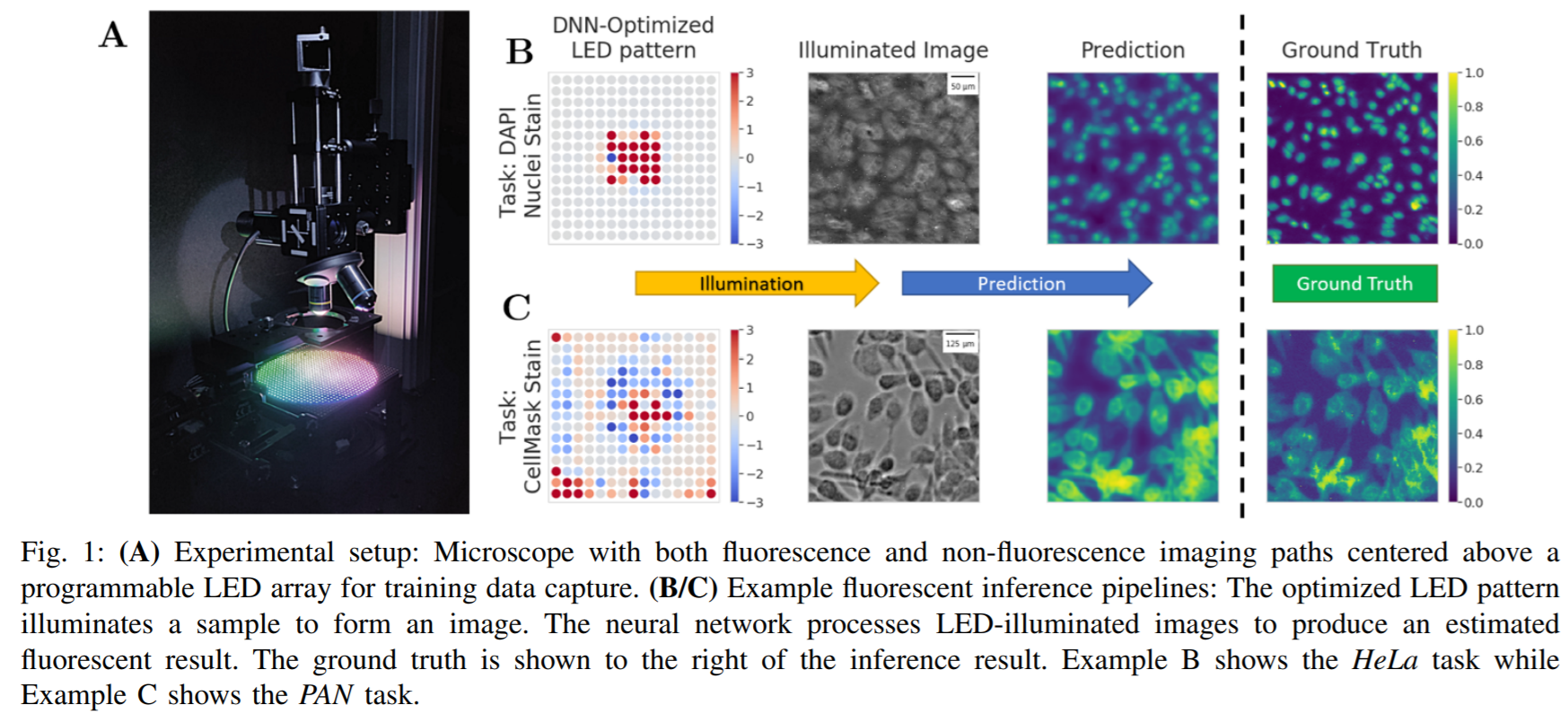

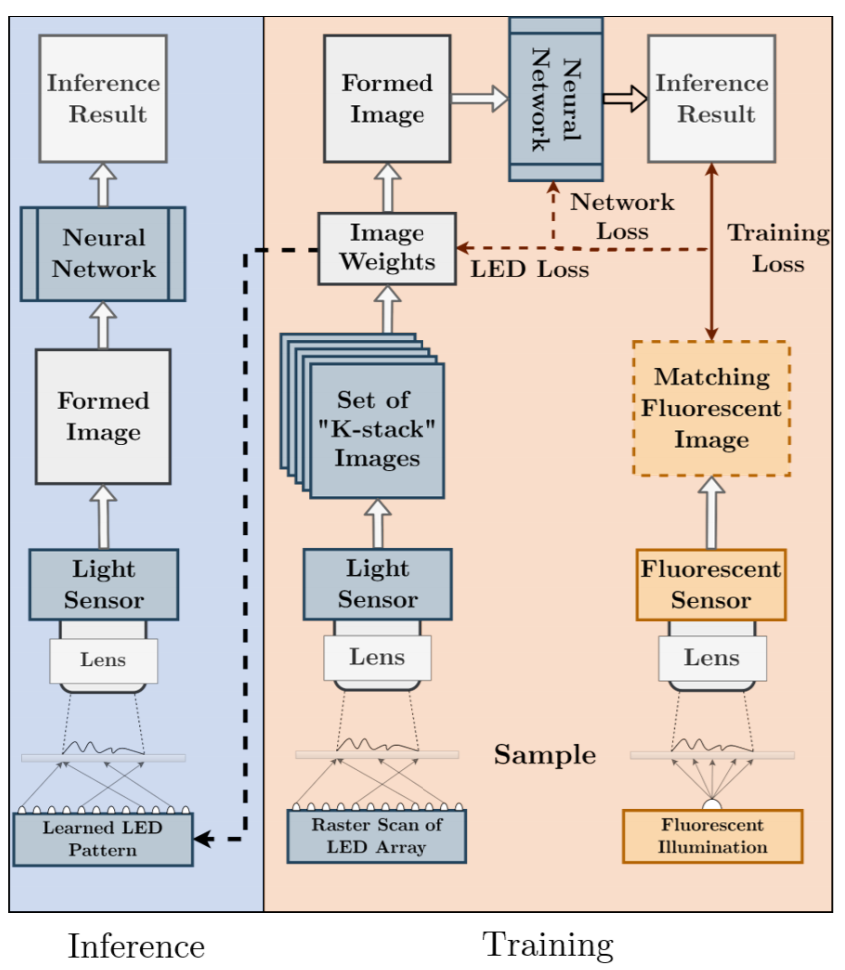

This paper introduces a new method of data-driven microscope design for virtual fluorescence microscopy. Our results show that by including a model of illumination within the first layers of a deep convolutional neural network, it is possible to learn task-specific LED patterns that substantially improve the ability to infer fluorescence image information from unstained transmission microscopy images. We validated our method on two different experimental setups, with different magnifications and different sample types, and observed a consistent improvement in performance compared to conventional illumination methods. Additionally, to understand the importance of learned illumination for the inference task, we varied the dynamic range of the fluorescent image targets (from one to seven bits) and showed that the margin of improvement for learned patterns increased with the information content of the target. This work demonstrates the power of programmable optical elements for enabling better machine learning algorithm performance and for providing physical insight into the next generation of machine-controlled imaging systems.
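As a rough illustration of the two ingredients described above, the following sketch shows one way an illumination model and a reduced-bit-depth target could be expressed. It assumes that the image captured under a multi-LED pattern can be modeled as a weighted sum of images captured under each LED individually, and that the dynamic range of a target is reduced by uniform quantization of values in [0, 1]. The function names `illuminate` and `quantize_target` are hypothetical and are not taken from the paper.

```python
import numpy as np


def illuminate(led_stack, weights):
    """Model the image under an LED pattern as a weighted sum of single-LED images.

    led_stack: array of shape (n_leds, height, width), one image per LED.
    weights:   array of shape (n_leds,), the (learnable) LED brightness pattern.
    Assumption: the per-LED images add linearly.
    """
    return np.tensordot(weights, led_stack, axes=1)


def quantize_target(target, bits):
    """Uniformly quantize a target in [0, 1] to 2**bits grey levels.

    Assumption: this is one plausible way to vary dynamic range, not
    necessarily the procedure used by the authors.
    """
    levels = 2 ** bits - 1
    return np.round(np.clip(target, 0.0, 1.0) * levels) / levels


# Hypothetical usage with random data standing in for real measurements.
rng = np.random.default_rng(0)
stack = rng.uniform(0.0, 1.0, size=(3, 8, 8))
pattern = np.array([0.5, 0.2, 0.3])
image = illuminate(stack, pattern)             # shape (8, 8)
one_bit_target = quantize_target(image, 1)     # values in {0, 1}
seven_bit_target = quantize_target(image, 7)   # up to 128 distinct levels
```

In a trainable setting, `weights` would be a parameter of the first network layer, so the pattern is updated by the same optimizer that updates the convolutional filters.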

Our results demonstrate a novel method for developing image capture systems specifically optimized for deep-learning-based processing. By placing the physical parameters of the microscope in the gradient pathway, we jointly optimized the way an image was captured with the way it was processed. This allows imaging systems to sample data in a way governed by optimization rather than by human preferences.
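To make the idea of placing physical parameters in the gradient pathway concrete, here is a deliberately small toy sketch. It is not the authors' network: the "processing" stage is reduced to a hypothetical scalar gain and bias, the data are synthetic, and the capture model is assumed to be a weighted sum of single-LED images. The point is only that the loss gradient flows through the processing stage back into the LED weights, so both are updated together.

```python
import numpy as np

rng = np.random.default_rng(0)
n_leds, n_pixels = 4, 64

# Synthetic stand-ins: one flattened image per LED, and a target image.
led_stack = rng.uniform(0.0, 1.0, size=(n_leds, n_pixels))
true_weights = np.array([0.8, 0.1, 0.6, 0.2])
target = 2.0 * (true_weights @ led_stack) + 0.1

# Physical parameters (LED weights) and processing parameters (gain, bias).
weights = np.full(n_leds, 1.0 / n_leds)
gain, bias = 1.0, 0.0
learning_rate = 0.02
losses = []

for step in range(500):
    # Forward pass: simulate capture, then process the captured image.
    captured = weights @ led_stack
    predicted = gain * captured + bias
    residual = predicted - target
    losses.append(np.mean(residual ** 2))

    # Backward pass: chain rule through processing into the physical layer.
    grad_predicted = 2.0 * residual / n_pixels
    grad_gain = np.sum(grad_predicted * captured)
    grad_bias = np.sum(grad_predicted)
    grad_captured = gain * grad_predicted
    grad_weights = led_stack @ grad_captured

    # Joint update of capture (weights) and processing (gain, bias).
    weights -= learning_rate * grad_weights
    gain -= learning_rate * grad_gain
    bias -= learning_rate * grad_bias

print(f"loss: {losses[0]:.4f} -> {losses[-1]:.4f}")
```

In practice an automatic-differentiation framework would compute these gradients, and the processing stage would be a deep convolutional network rather than a gain and bias; the manual chain rule here is only meant to expose the pathway.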

Our experimental results show that the physical parameters of a microscope play an important role in deep learning image-to-image inference systems. By allowing the joint optimization of illumination and image processing, we achieve consistently better performance than all tested alternatives. We hope our results continue to motivate the imaging and machine learning communities to re-examine how they capture data and to continue developing an understanding of the connection between data capture and data processing.